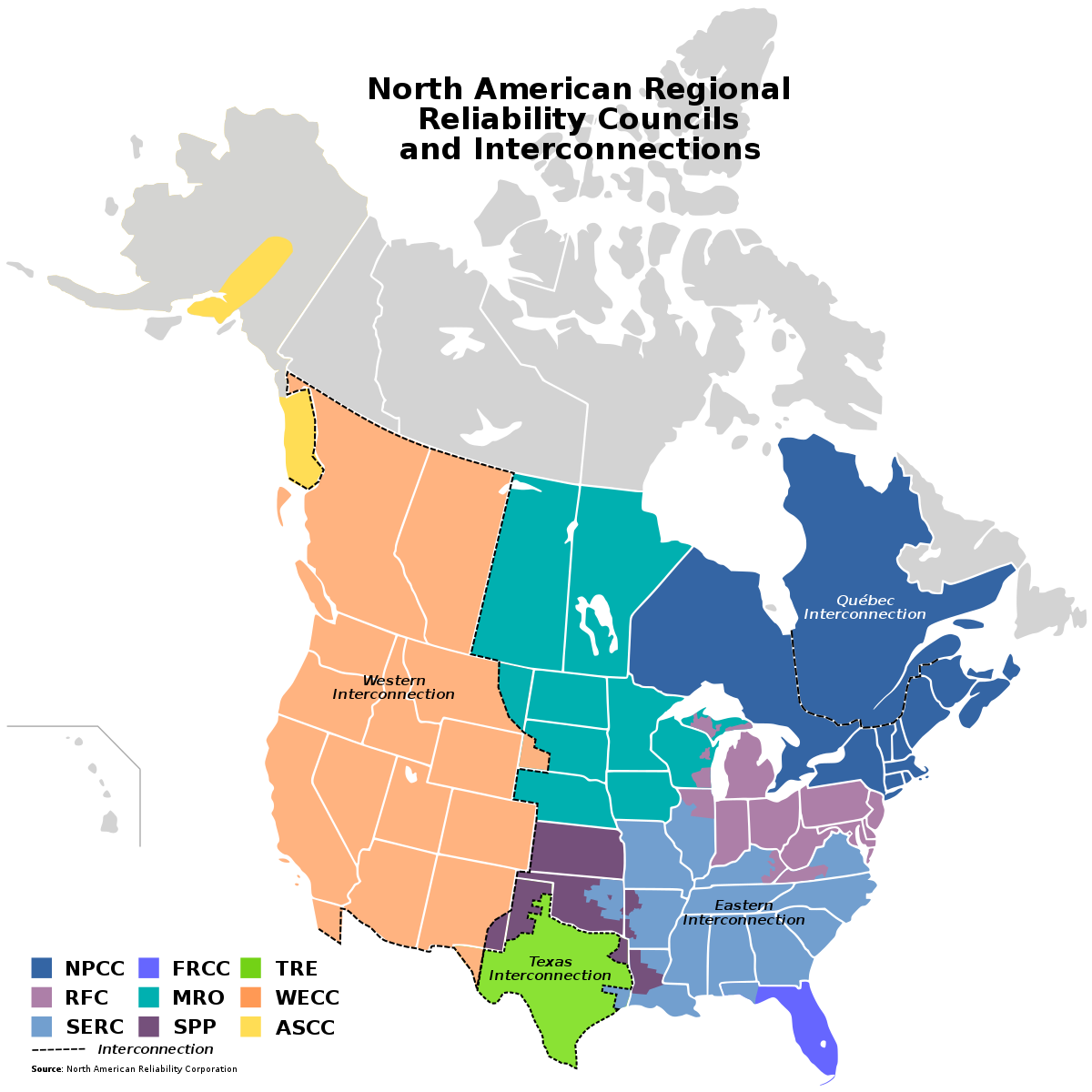

What would you do if the power grid where you live went down and there was no electricity for an extended period of time? You might want to think about that, because experts are warning that it is just a matter of time before cyberattacks successfully cripple our power grids. In fact, foreign hackers are working hard to infiltrate critical infrastructure as you read this article. As you will see below, we are extremely vulnerable, and the Russians and the Chinese have both developed highly advanced cyberwarfare capabilities. When the U.S. ends up fighting a war with Russia or China (or both simultaneously), devastating cyberattacks on our power grids will be conducted. When your community is suddenly plunged into darkness, what is your plan? (Read More...)

Which Major City Will Completely Collapse First – Los Angeles, Chicago Or New York City?

In 2024, virtually all major U.S. cities have certain things in common. First of all, if you visit the downtown area of one of our major cities you are likely to see garbage, human excrement and graffiti all over the place. As you will see below, some of our core urban areas literally look like they belong in a third-world country. Most of our politicians don’t seem too concerned about doing anything to clean up all the filth, and so it shouldn’t be a surprise that rat populations are absolutely exploding all over the country. In some of our largest cities, the total rat population is numbered in the millions. Meanwhile, rampant theft, out-of-control violence, endless migration, predatory gangs and the worst drug crisis in the entire history of our nation have combined to create a “perfect storm” of social decay that is unlike anything that any of us has ever seen before. Millions of law-abiding citizens and countless businesses have been fleeing America’s largest cities, and property values in our core urban areas have been absolutely crashing. We really are in the early stages of a full-blown societal “collapse”, and things just keep getting worse with each passing day. (Read More...)

There Is More To This Current Bird Flu Panic Than Meets The Eye

Why are global health officials issuing such ominous warnings about the bird flu? Do they know something that the rest of us do not? H5N1 has been circulating all over the planet for several years now, and it has been the worst outbreak that the world has ever seen. Hundreds of millions of birds are already dead, and now H5N1 has been infecting mammals with alarming regularity. The good news is that so far it has not been a serious threat to humans, but could that soon change? According to the World Health Organization, the possibility that H5N1 could start spreading among humans is an “enormous concern”… (Read More...)

Why Are They Trying So Hard To Convince Us That People That Are Seeing Black-Eyed Demon Faces Have A “Disorder”?

If you came into contact with someone with a face that looked like a demon and eyes that were completely black as night, what would you do? Encounters of this nature are popping up on social media at the exact same time that the mainstream media is trying really hard to convince all of us that anyone who is seeing black-eyed demon faces has a “disorder”. In fact, if you type “demon face” into Google News, you will literally get hundreds of articles about a disorder known as prosopometamorphopsia (PMO). But this is a very, very rare disorder. At this point, fewer than 100 cases of PMO have ever been documented. (Read More...)

Alert! Israel Is Preparing To Hit Iran “Forcefully” And The Iranians Are Promising To Retaliate

This is the most dangerous moment that we have seen in the Middle East in modern times. As you will see below, Israel has decided to hit Iran. There is still debate about whether it should be a devastating blow or a more limited strike. But in either case, the Iranians are pledging to retaliate. In fact, the Iranians are promising to respond to any attack with overwhelming force. Needless to say, that would certainly provoke another response from Israel. The cycle would continue until someone finally decides that enough is enough. But what if neither side backs down and the conflict escalates to an unthinkable level? (Read More...)

“A Declaration Of War”: How The Conflict Between Israel And Iran Fits Into The Bigger Picture

Iran’s attack on Israel is being called “a declaration of war”, and that is precisely what it was. Shooting more than 300 drones and missiles at another country is definitely an act of war, and the Iranian parliament was loudly chanting “death to Israel” as it was happening. Many are shocked that a full-blown war between Israel and Iran is developing, but those who have been following my work are not shocked at all. In fact, this is precisely what we have been anticipating. I have been writing about a coming war between Israel and Iran for years, and in my book entitled “Lost Prophecies Of The Future Of America” I lay out what will happen in great detail. So the truth is that we have been waiting for this for a very long time and now it is here. (Read More...)

Multiple Global Pestilences Threaten To Spiral Completely Out Of Control

Do you remember the nightmares that we experienced in 2020 and 2021? Nobody wants to go through anything like that again, but right now multiple pestilences are raging all over the planet, and one or more of them could potentially spiral completely out of control. For a long time, I have been warning my readers that we have entered an era of great pestilences. Humanity’s ability to manipulate diseases greatly exceeds humanity’s ability to control diseases, and as you read this article scientists all over the world are monkeying around with some of the most dangerous bugs that humanity has ever known. That is a recipe for disaster, and it is only a matter of time before we experience a crisis far worse than anything that we went through in 2020 and 2021. (Read More...)

Waiting For The Other Shoe To Drop In The Middle East…

There has been a tremendous amount of speculation about when and how Iran will attack Israel. In the aftermath of Israel’s stunning airstrike on a building directly next to the Iranian embassy in Damascus, Iranian officials pledged that there would be a very “harsh” response. I take them at their word, and I am entirely convinced that a response is coming. But exactly when that response will happen remains a mystery as I write this article. So for now, we are waiting for the other shoe to drop. According to Jennifer Jacobs, the senior White House reporter for Bloomberg, sources have told her that an attack on Israel is imminent… (Read More...)